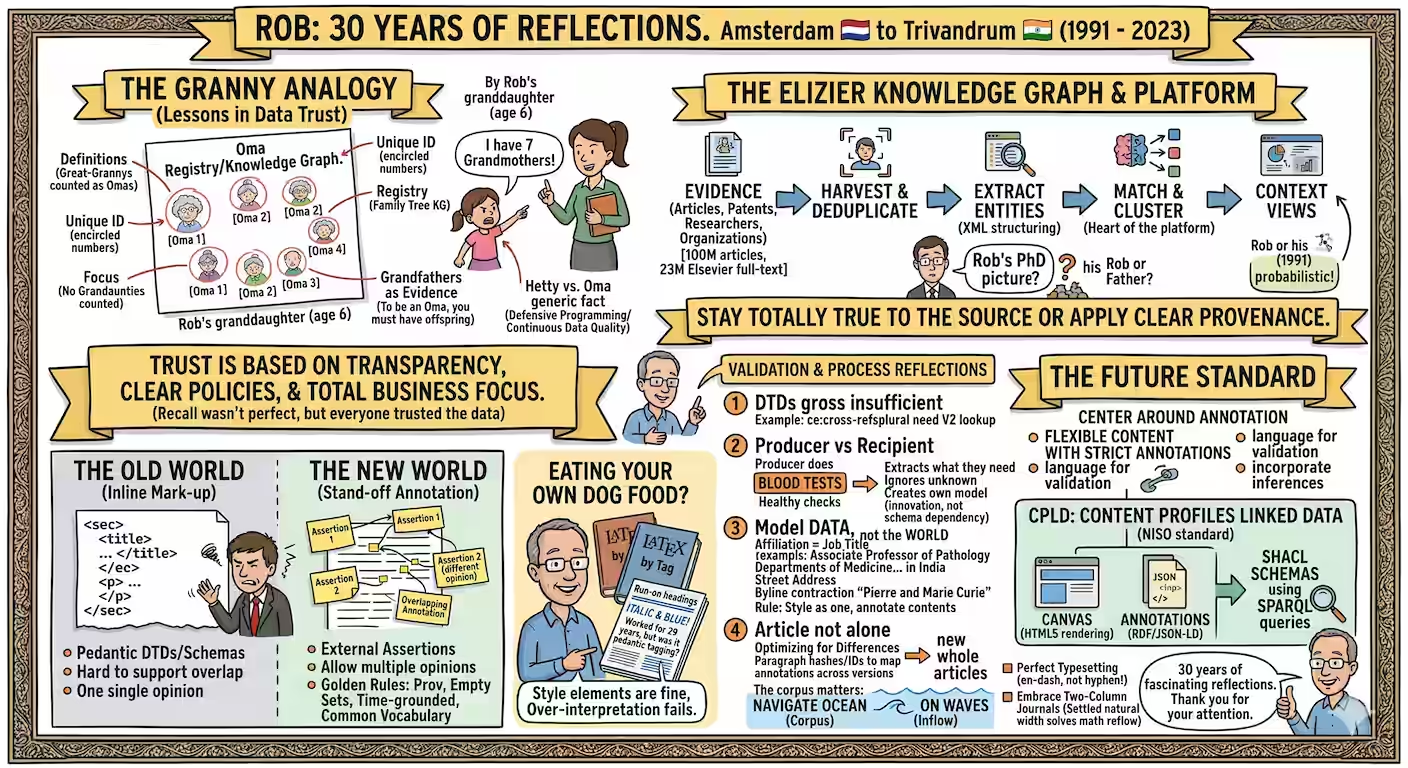

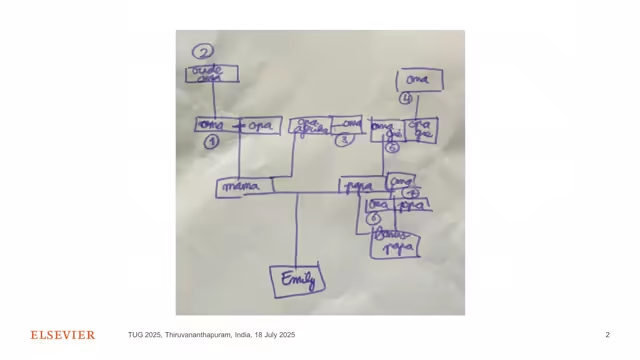

There is a drawing by a six-year-old girl in Amsterdam that contains more wisdom about data quality than three decades of enterprise content architecture. In it, seven figures stand in a family tree — each grandmother numbered with a circle, each grandfather conspicuously absent. The girl is Rob Schrauwen's granddaughter. She was asked to prove she had seven grandmothers; she drew them all, labeled them carefully, and in doing so invented continuous data qualityThe practice of ensuring data remains accurate, consistent, and trustworthy over time — not just at the moment of entry. Rob's granddaughter used a generic label ("Oma") when unsure of a spelling, rather than risking a wrong name that would discredit the entire record., unique identifiers, and a knowledge graph — all before she learned to spell "Hetty."

"I am here next to Oma one, that's me, and my mother is missing," Rob told the audience at the TeX Users Group conference in Kerala in July 2025. "So the recall is only seven divided by eight. But that doesn't matter, because everybody got to trust in the data. And this trust is based on transparency, clear policies, and total focus on the business problem at hand."

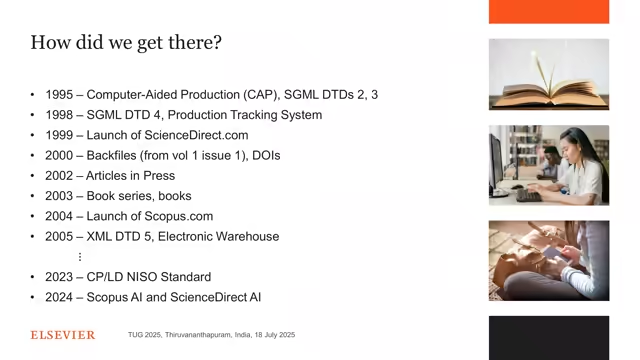

Rob has been at Elsevier since 1991. He joined as a mathematician, became Chief Content Architect, and has spent thirty years thinking about what it means to represent knowledge in a machine-readable form. His talk — titled, provocatively, True but Irrelevant — was a quarter-century of reflections on scientific content standards. It was also, quietly, one of the most honest talks about the failures of enterprise data architecture ever delivered at a technical conference.

The Knowledge Graph and the Grandmothers

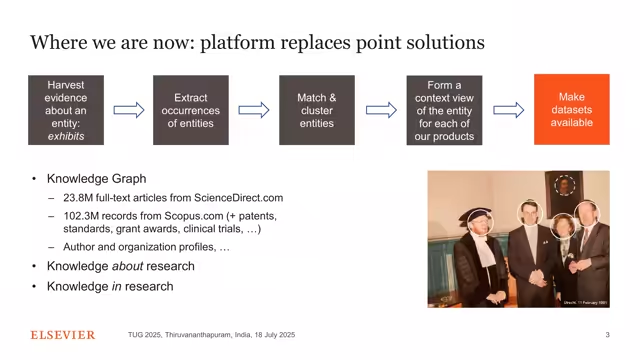

The Elseviers knowledge graph is not made of grandmothers. But it operates on the same principles. It contains a hundred million articles from other publishers, twenty-three million full-text articles from Elsevier itself, a hundred million patents, forty million researchers, a hundred thousand organizations — all carefully curated, all connected to each other. The nodes are entities; the edges are relationships. The whole thing is, Rob said, "our most important asset."

But here is what is interesting about this graph: it is built through a process that is fundamentally probabilistic. Rob showed the audience a photograph from his PhD defense — a picture in which multiple figures appear in a painting behind him, some of which an algorithm might识别 as faces (entities) and some of which are not. "All these things are heavily probabilistic," he said. "The head on the painting — is that also an entity or not? The algorithm could be wrong."

"We need to be totally true to the source. We don't know what this lookup of PubMed is. I can only accept a lookup if there is really clear measurement of the precision and recall of the lookup itself." — Rob Schrauwen, TUG 2025

And this is where the granddaughter story becomes less cute and more profound. She had a clear policy: count great-grandmothers as grandmothers. She issued unique identifiers. She provided evidence for every assertion. She fell back to a generic label rather than risk a wrong one. And crucially, her record of seven grandmothers was imperfect — it was only 87.5% complete — but everyone trusted it anyway. Because trust, Rob argues, is not about perfection. It is about transparency.

The Five-Step Data Platform

Markup vs. Annotation: The Sticky Note Revolution

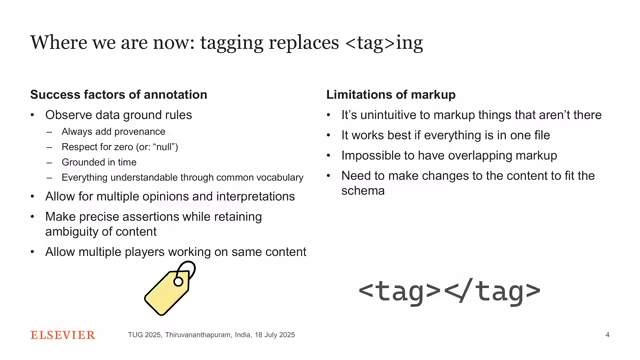

For most of the past thirty years, the publishing industry has operated on a principle Rob calls markup: taking a document and tagging its parts with structural labels. Title goes in a <title> tag. Author name goes in an <author> tag. This is inline markup — the annotations are embedded within the document itself.

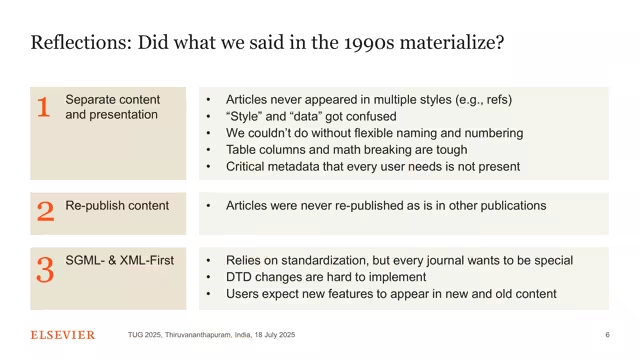

Rob's central argument — the one he returned to again and again across his thirty reflections — is that this model is fundamentally broken. Not because it was wrong, but because it was true but irrelevant: it solved a real problem in a world that no longer exists.

"Annotation means making assertions about the document at hand. We do those assertions in RDF, in the Resource Descriptor Format. We moved from markup to annotation." — Rob Schrauwen

The problem with inline markup is that it can only represent one view of reality. The author's view, OR the publisher's view, OR the typesetter's view — but not all three at once. It makes it nearly impossible to annotate things that aren't there. And it destroys provenance: when you replace a value with a "corrected" one, you lose the original.

Stand-off annotation is different. The annotations live outside the document, in a separate layer. The document stays as it is — faithful to the source — while assertions accumulate around it like sticky notes on a whiteboard. Multiple people can add multiple opinions about the same passage. You can annotate things that aren't there. You can add provenance to every single claim.

"Annotations are even more tagging than tagging. We have been using the word 'tag' for an XML tag with angle brackets. But a tag that you stick onto it like a sticky note is far more like a tag than an XML tag. So I say: we go from tagging to real tagging."

Thirty Years of Dogma

Rob's reflections on the DTD — the Document Type Definition, the schema that defined what was allowed in an Elsevier article — are a masterclass in the gap between theoretical purity and practical reality.

Consider emphasis1 through emphasis9. In the 1980s, the architects of Elsevier's DTD were concerned that authors might one day want to swap italic and bold in their articles — so they created nine levels of emphasis, purely semantic, not tied to any specific rendering. "Nobody would really accept that the bold and italic would swap around in their article," Rob said. "It's just a silly thought." And yet they built an entire tagging system around it. To add an interpretation can only go wrong.Rob's key lesson from the emphasis1-9 experience: when you layer interpretation on top of presentation, you create a system that neither machines nor humans can reliably process. Better to mark what IS (italic/bold) and annotate separately what it MEANS.

Or consider the CE float anchor — the tag that indicates where a figure should approximately appear in a printed article. It was put in because copy editors used to draw a red circle around figure numbers on paper proofs. But the DTD had nothing equivalent for table column width, because table column behavior was "the total remit of the typesetter." "And now we see that's really a problem," Rob said. "And people in Elsevier have been told, 'Oh, now we have LLMs, maybe we can automate it.' Maybe for 20 years, 30 years, people have been trying to automate it. But what they should have seen is that the DTD was wrong all along."

"If you create a LaTeX file, that you would create an HTML file and a PDF file in one go and they are more or less the same. We can achieve that, then we are there." — Rob Schrauwen

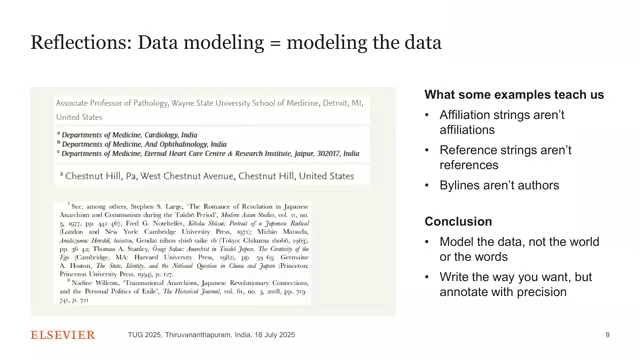

Modeling the Data, Not the Words

One of the most entertaining sections of Rob's talk involved what happens when you try to force real author-supplied data into theoretically pure data models. He showed examples from actual articles: an affiliation that said "Associate Professor of Pathology" — which is a job title, not an affiliation. Another that said "Departments of Medicine and Cardiology in India" — which is two departments and a country, but gives no institution name. A third that was just a street address.

"If you are a data modeler, you're deeply shocked about that," Rob said. "At least if you do, if you model the words. But I don't model the words, I model the data. And this is what people do, so let them."

"Modeling the data is modeling the data. That is it." — Rob Schrauwen

The same applies to references. The DTD assumed a reference was a single one-to-one entity. But in real articles, references appear in sentences like "Among others, several studies have shown this (Smith 2020, Jones 2021, Kumar 2019)." That's three references in one sentence, and the model had no way to represent it. "I think it's really hard to deal with this because somebody thought that the reference is always one-to-one," Rob said. "I think it's really another proof why we want to annotate."

The Ocean and the Waves

Rob's most poetic moment came when he described the relationship between the individual article and the entire corpus. He called it the ocean and the waves.

"The inflow are the waves," he said. "To navigate the ocean on the waves is already very hard. There's storms, there is high waves, ships sink. I mean that's the process that many people are on. But underneath is four kilometers of water mass. That is my corpus. All the millions of articles that are out there. And they need to be correct too. They need to be constantly refreshed because they can't go stale."

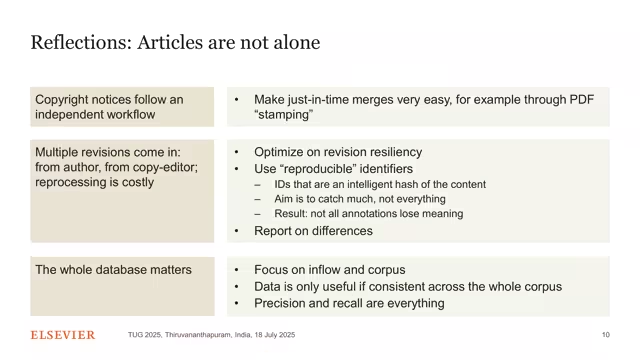

A new data point is only valuable if it is useful across the corpus. If you discover that a certain chemical compound is associated with a certain disease in one article, that discovery is scientifically meaningful only if you can verify it consistently across thousands of other articles. "Precision, precision, and recall are everything," Rob said. "We need to be very precise."

He also introduced the concept of reproducible identifiersA hash of the content itself — a paragraph ID generated from the text of the paragraph. If any character changes (even a comma), the hash changes. This means you can detect whether any given article has been modified since your annotations were made, without needing a centralized version control system. — essentially content-addressable hashes for paragraphs. If a paragraph changes even by a comma, its hash changes. This means annotations can remain valid across versions: if the annotated paragraph is unchanged in a new version, the annotation still applies. If it changed, you recompute. But you don't recompute what wasn't legally necessary to recompute.

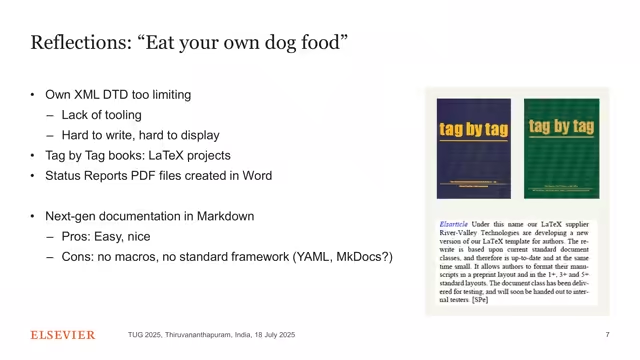

Eating Your Own Dog Food

Rob made a confession that few senior executives would make at a technical conference: "I have never eaten my own dog food." He was Chief Content Architect of Elsevier. He was responsible for the Elsevier DTD. He wrote two books documenting it — 444 pages, tag by tag. And he wrote them in LaTeX, not in his own XML format.

"I have this book and so I think there is no tooling that is widely available. It's really hard to write these things. Oh right, should I write all my angle brackets in Notepad++? I don't know, I have no tooling." — Rob Schrauwen

He also pointed out that the Elsevier newsletter — the one he sent to thousands of subscribers every year — had third-order headings that were coded not as headings in Microsoft Word, but as "italic and blue." And it worked perfectly. "Maybe this is somewhat overrated," he said, with the gentle self-deprecation of someone who has spent decades enforcing standards he himself couldn't live by.

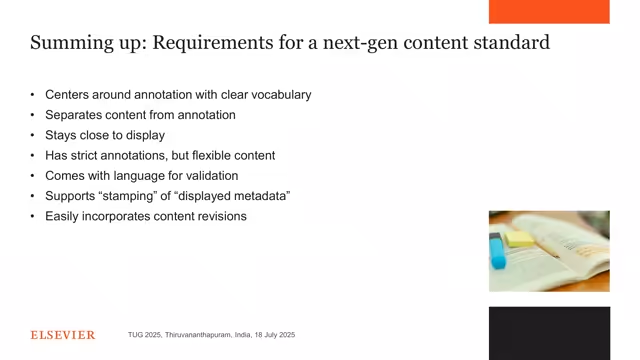

The CPLD Standard: Where It All Leads

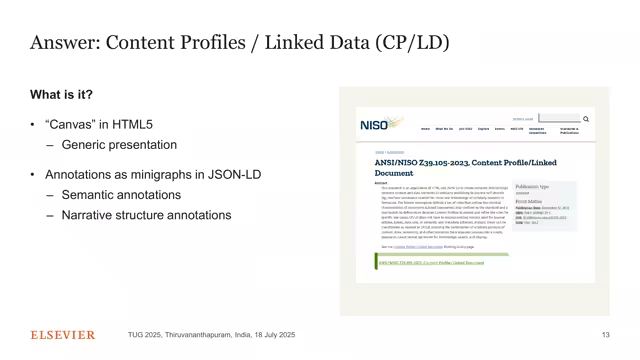

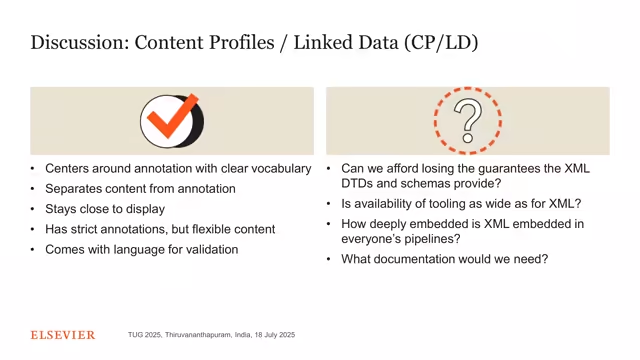

After thirty reflections on what went wrong, Rob arrived at what he believes is the answer: a NISO standard called Content Profiles Linked Data (CPLD). He called it "the only alternative I currently have in mind."

CPLD has two components. The first is Canvas — HTML5 rendering. "Leave the HTML alone," Rob said. "Don't try to make it into a new DTD by adding a million nested divs." The second is annotations in JSON-LD (a Linked Data format), validated using SHACL schemas rather than DTDs.

DTDs cannot express cross-referential constraints — like "all cross-ref destinations must have labels." SHACL schemas can run SPARQL queries within the graph, making complex multi-part validations possible in a standard language rather than homegrown tooling.

"We don't do that with DTDs anymore, we do that with SHACL schemas in the JSON-LD. We let the HTML do what it is. Don't get excited about putting even more nested divs into the HTML file. That's what many people do. Div, div, div... and you might as well create a new DTD." — Rob Schrauwen

And in a SHACL schema, you can make SPARQL queries. Rob's example: check that all cross-ref destinations have labels before pushing to CrossRef. "Now that is so beautiful," he said. "You can do a SPARQL query, so my test about the cross-ref can be done there. You just check — do all the destinations really have a label? Yes. Then we can push it onto CrossRef."

AI and the Knowledge Graph

During Q&A, someone asked about AI — specifically, how does CPLD help when LLMs want to summarize articles, not read them whole?

Rob's answer was nuanced. Elsevier uses RAG (Retrieval Augmented Generation)A technique where an LLM is given relevant context from a knowledge base (in this case, the Elsevier corpus) to answer questions about it. The LLM generates responses grounded in actual retrieved text rather than purely from its training data. — giving LLMs relevant context from their own articles before generating answers. But there's a problem: scientific articles contain math and specialized concepts that LLMs routinely misinterpret, leading to hallucinations. "To prevent that, the text must be extremely high quality," Rob said. "Actually, what is now our biggest worry is that all the things that we want to do is present the information inside those abstracts."

The long game, then, is not replacing the knowledge graph with AI, but using the knowledge graph to make AI trustworthy. "We can make a difference thanks to the large knowledge graphs that we have," Rob said. "Open access content is easy to get. The only way we can [compete] is to make sure that there are so many issues in the data that we don't have, thanks to 20 years of the kind of processing that helped for us."

The Conversation That Should Have Happened

One of the most fascinating audience questions involved multilingual article formatting — how do you tag "page 3 of 10" when some languages put the total first and others put the current page first? Rob's answer got at the heart of his argument: don't try to capture this in the structure. "We have the text in its textual form," he said. "Don't even try to capture it. It just says it in the other way around or however it is in that language. But now you point at it. That is the whole thing about annotation. You point at it, and that assertion is in our standard vocabulary. It says it is page 3 or something like that. So there that is not in a language, that is in the annotation language."

This — separating content from annotation, structure from assertion — is the through-line of Rob's entire talk. It is the lesson his granddaughter intuitively understood when she drew seven grandmothers and labeled them. It is the lesson the XML community spent thirty years learning and then forgetting and then relearning. It is true. And it is, as the title says, only now becoming relevant.

Slides: PDF on TUG2025 website · License: TUG2025 abstract page